You can tell a lot from how a story starts. If you hear “Once upon a time …” you’ll probably hear a fairy tale like “The Three Little Pigs” or “The Little Red Hen”. Around campfires, kayakers like to tell stories that begin with “No kidding, there I was …” and go on to a tale of heart-thumping excitement, harrowing misfortune, or lucky escape. In software development, stories often begin (or end) with “I’m serious. You can’t make up stuff like this.”1

I was flipping through an old work notebook and came across the following story.

I spent three days working with a client who had adopted Scrum for project management about six months earlier. On the fourth day I attended a Sprint Planning Two meeting (where user stories are broken into tasks with time estimates). As we talked about stories and sizes, George asked the following question: “How do we deal with work that is too big to finish in a sprint?” In all my time coaching teams, I haven’t found a user story so large that it couldn’t be done in a sprint. If there is such a story, it is usually an epic that can be further divided into smaller stories.

Wanting to be helpful, I said, “Well, split the story into smaller stories.” Mentally allowing that I haven’t seen all the possible user stories in the world (and there /MIGHT/ be one story so large it couldn’t be finished in a sprint), I continued … “If that doesn’t work, pull the work into the sprint and burn down as much as possible. You don’t get velocity points, but if the backlog is properly ordered you’ll have work done for the start of the next sprint.” Hoping to point to some perfect future day when the team was burning down faster than anticipated, I added, “It’s just like when the sprint burns down faster than estimated. You pick the next story from the Product Backlog and start work on it.”

George replied, “We’d never do that. We get graded on how well we complete our stories. If we have unfinished work it counts against us.”

The Fundamental Software Development Process

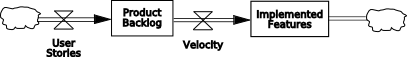

The fundamental software development process looks like:

This system dynamics drawing2 varies somewhat from the Diagram of Effects (aka Causal Loop Diagram) I often use by showing the stocks (levels) and flows (rates) associated with the development process. User stories flow into the Product Backlog. The team converts the user stories into Implemented Features at some rate (Velocity). By and large, it’s how everyone develops software. Steps may have different names and take more time to complete (or not), but we take what users want and give them software that performs those actions.

An important aspect of the Fundamental Software Development Process involves recognizing that it’s an open loop system, and open loop systems are inherently stable. User stories come in as they will and become part of the Product Backlog. The team works at some natural development speed based on their abilities, the story complexity, and their intrinsic motivation. The Implemented Features accumulate and eventually the software gets released to the users. Based on the Product Backlog size (in story points) and the team’s velocity (in story points per sprint), it’s possible to calculate when the next releasable set of Implemented Features will be ready to go to the users.
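The calculation is simple enough to sketch in a few lines of Python. This is a minimal sketch, assuming a fixed backlog and a constant velocity (it ignores new stories arriving during the release). The function names, the two-week sprint length, and the numbers are hypothetical and chosen only for illustration.

```python
import math
from datetime import date, timedelta


def sprints_to_release(backlog_points: float, velocity: float) -> int:
    """Whole sprints needed to drain the backlog at a constant velocity."""
    if velocity <= 0:
        raise ValueError("velocity must be positive")
    return math.ceil(backlog_points / velocity)


def release_date(start: date, backlog_points: float, velocity: float,
                 sprint_length_days: int = 14) -> date:
    """Projected date on which the last sprint of the release ends."""
    sprints = sprints_to_release(backlog_points, velocity)
    return start + timedelta(days=sprints * sprint_length_days)


# Hypothetical numbers: 120 points in the backlog, 25 points per sprint.
print(sprints_to_release(120, 25))              # 5
print(release_date(date(2024, 1, 1), 120, 25))  # 2024-03-11
```

The forecast is only as good as the velocity number that goes into it, which is why what happens to that number matters so much in the rest of this story.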

But what if that date isn’t soon enough?

Add Some Feedback

The conversation with George happened on my last day on site and I had meetings stacked the rest of the day. But on the flight home I started wondering, “Why would someone want to ‘grade’ the teams on how well they completed the stories?” One answer is to build confidence in the team’s velocity value. Another possible explanation would be a behavioral assumption that developers tend to avoid work, and that a way to make sure they don’t shirk is to compare the sprint results with the estimates made during sprint planning.

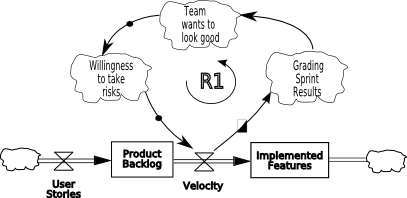

Whatever the reason for “grading” the sprints, the action of “grading” has created a feedback loop in the system. Adding feedback means taking a system output and sending that output (or some portion there of) back into the system input. I wrote about feedback control loops in Multi-Use Model. Feedback loops provide the opportunity for control, aiming the system at some new target such as a new delivery date. Unfortunately feedback also provokes instability in the system. Unless great care is taken, the system becomes dysfunctional at best and destructive at worst. The Fundamental Development Process now looks like:

The “split” rectangle means management has a choice at this point. They can choose to “grade” sprint results or not. If the grading happens, the rest of the reinforcing loop happens. In a nutshell:

- Team members want to look good. I don’t know if “counts against us” includes performance evaluation, but it could.

- Since the team wants to look good, they’re not likely to take risks. Not taking risks could mean:

  - Not pulling in additional work if they finish early.

  - Inflating story points for user stories during estimating sessions.

- Since the team won’t take risks, measured velocity won’t decrease (and indeed may increase) while actual velocity (the rate of delivering implemented features) may decrease. As Robert Austin notes:3

Boomerang Measurement

I confess I’m doing a certain amount of mind reading now, since I didn’t have a chance to talk with the person who decided grading the sprint results would be a “good thing”. I can’t wrap my mind around the idea that they explicitly set out to slow the development process. But slow it they did, and so we have another boomerang measurement.
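To make the boomerang concrete, here is a toy simulation of the reinforcing loop: estimates get inflated a little each sprint, and the team commits to a little less real work each sprint so it never misses. It is a sketch under loudly stated assumptions, not a model of George’s team. The function `simulate_grading` and every parameter value are hypothetical.

```python
def simulate_grading(sprints: int,
                     capacity: float = 20.0,
                     inflation_per_sprint: float = 0.10,
                     caution_per_sprint: float = 0.05):
    """Return (measured, actual) velocity per sprint under graded sprints.

    All parameters are illustrative assumptions:
      capacity             real work the team could do per sprint
      inflation_per_sprint growth in reported points per unit of real work
      caution_per_sprint   shrinkage in the share of capacity committed
    """
    measured, actual = [], []
    inflation = 1.0        # reported points per unit of real work
    committed_share = 1.0  # fraction of real capacity the team commits to
    for _ in range(sprints):
        real_work = capacity * committed_share  # team stops once commitments are met
        actual.append(real_work)
        measured.append(real_work * inflation)  # what the burndown chart reports
        inflation *= 1 + inflation_per_sprint
        committed_share *= 1 - caution_per_sprint
    return measured, actual


measured, actual = simulate_grading(6)
for sprint, (m, a) in enumerate(zip(measured, actual), start=1):
    print(f"sprint {sprint}: measured {m:.1f}, actual {a:.1f}")
```

With these made-up rates the reported velocity creeps upward every sprint while the real delivery rate falls, so the grades look better and better as the software arrives more and more slowly.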

Truth be told, I believe the person thought they were (are?) doing the best possible actions to help the developers be more efficient. I’m serious. You can’t make up stuff like this.

For more information on how management goes astray with measurements, I recommend reading Measuring and Managing Performance in Organizations by Robert Austin and Slack: Getting Past Burnout, Busywork, and the Myth of Total Efficiency by Tom DeMarco.

Got an example of a boomerang measurement? Drop me a note.

1 I first heard this line from Jerry (Gerald M.) Weinberg.

2 http://en.wikipedia.org/wiki/System_dynamics

3 Robert Austin, Measuring and Managing Performance in Organizations, Dorset House Publishing, ISBN 0-932633-36-6, page 38.